Use Raw Data: Averages Lose Information

January 1, 2012

We all love to average data. A friend recently said that he averaged 65 mph on his last trip, effectively hiding the many miles driven well above the speed limit to make up for driving slowly through a construction zone and stopping to replace a flat tire. While an average is easy to use in a report or conversation, it often (intentionally or not) hides critical information needed to understand the scope of a problem and masks possible avenues for troubleshooting.

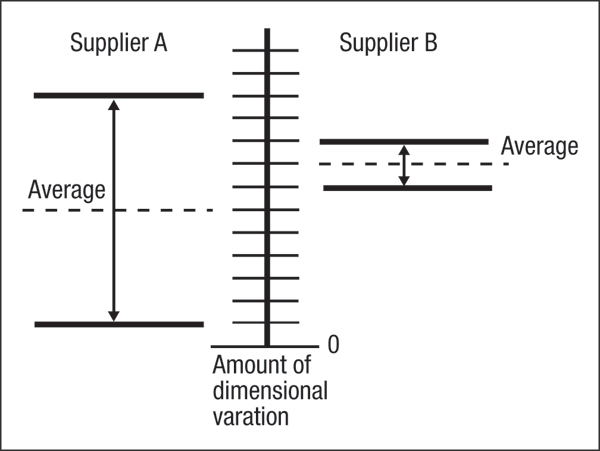

Consider two press shops supplying the same part to XY Company. The average dimensional deviation from spec for all parts made by supplier A is smaller than that for supplier B, giving supplier A bragging rights until XY Company fires it. Supplier A is puzzled until it sees the raw data (in the illustration): while its average deviation was lower than supplier B's, its part-to-part variation was huge. A consistent average deviation, like supplier B's, usually can be corrected through adjustments to the tooling or press, which would allow supplier B to make the required changes and supply parts with accurate and stable dimensions. For supplier A to do the same, it would have to conduct a detailed study to determine the source of the dimensional variation and then implement major process changes, since stamping variation often results from two or more input variables.

The average dimensional deviation for supplier B can be corrected by press or die modifications. Supplier A must attack its process variation before it can lower its average deviation.
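A short calculation can make the supplier comparison concrete. The sketch below uses hypothetical deviation values (not the data in the illustration) to show how a lower average can coexist with a much larger spread, and why a consistent offset like supplier B's can be removed by a single adjustment. The standard deviation is used here as one common measure of spread; it is an illustrative choice, not a method prescribed by the article.

```python
from statistics import mean, stdev

# Hypothetical deviations from nominal (mm), ten parts per supplier.
# Illustrative values only, not measured data from the article's figure.
supplier_a = [0.40, -0.35, 0.30, -0.45, 0.25, -0.20, 0.50, -0.40, 0.15, -0.10]
supplier_b = [0.31, 0.29, 0.30, 0.32, 0.28, 0.30, 0.31, 0.29, 0.30, 0.30]

def summarize(name, deviations):
    """Print and return the average deviation and the sample standard deviation."""
    avg = mean(deviations)
    spread = stdev(deviations)
    print(f"{name}: average = {avg:+.3f} mm, std dev = {spread:.3f} mm, "
          f"range = {min(deviations):+.2f} to {max(deviations):+.2f} mm")
    return avg, spread

avg_a, sd_a = summarize("Supplier A", supplier_a)
avg_b, sd_b = summarize("Supplier B", supplier_b)

# Supplier B's error is mostly a constant offset: shifting the process by its
# average (e.g., a tooling or press adjustment) centers every part on nominal.
corrected_b = [d - avg_b for d in supplier_b]
print(f"Supplier B after offset correction: max |dev| = "
      f"{max(abs(d) for d in corrected_b):.2f} mm")
```

With these numbers supplier A's average is close to zero while its parts scatter by nearly half a millimeter in both directions; no single offset correction can fix that.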

Another example of how averaging data hides issues in the press shop is the CAD computation of the increase in length of line (LOL), measured at various locations along the stamping surface between die radii. Comparing the final LOL with the original LOL available to make the part yields the percent stretch. Using only two measurements, the final and original LOL, provides only the average stretch along the line. However, in most stampings the material does not stretch uniformly, and many areas will exhibit percent stretch well above and below the average. Areas of high stretch could be close to failure, so this local information, not the average, is what troubleshooting requires.
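The arithmetic behind that point can be sketched as follows. It assumes the line of length is split into segments whose original and final lengths are known (for example, from a grid or from section points in the CAD model); the segment lengths below are hypothetical, and percent stretch is taken as the engineering value (final − original) / original × 100.

```python
# Hypothetical original and final segment lengths (mm) along one line of
# length (LOL) between die radii. Illustrative values, not measured data.
original = [20.0, 20.0, 20.0, 20.0, 20.0]
final = [20.4, 21.0, 26.0, 21.0, 20.6]

def percent_stretch(orig_len, final_len):
    """Engineering stretch in percent: (final - original) / original * 100."""
    return (final_len - orig_len) / orig_len * 100.0

# Two-measurement method: total original LOL vs. total final LOL.
average_stretch = percent_stretch(sum(original), sum(final))

# Segment-by-segment method: local stretch along the same line.
local_stretch = [percent_stretch(o, f) for o, f in zip(original, final)]

print(f"Average stretch over the line: {average_stretch:.1f}%")
for i, s in enumerate(local_stretch, start=1):
    print(f"  segment {i}: {s:.1f}%")
print(f"Peak local stretch: {max(local_stretch):.1f}%")
```

Here the whole line stretches 9% on average, but one segment stretches 30%, more than three times the average; that segment is the one that might split.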

One more example: the percent scrap rate, often calculated by counting the number of defective or rejected stampings tossed into a scrap bin at the end of a run. Dividing the number of scrapped parts by the total number of stampings made gives an average percent scrap rate for the run. Another method is to draw a line on a marker board for each stamping scrapped, count the marks and divide that count by the total number of stampings produced. Other companies use parts per million (PPM) as a measure of the average scrap rate. Regardless of the measurement method, the average scrap rate provides little useful information for troubleshooting or process correction, because it does not show which defects caused the scrap or when during the run they occurred.
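To illustrate the difference, the sketch below computes the average scrap rate both as a percentage and in PPM, then looks at the same records kept as raw data. The scrap log, its fields (hit number within the run, defect type) and the defect names are hypothetical assumptions for illustration, not a format taken from any particular shop.

```python
from collections import Counter

# Hypothetical scrap log for one run: (hit number within the run, defect type).
# Illustrative data only; a real log would be recorded at the press line.
total_parts = 10_000
scrap_log = [(12, "split"), (25, "split"), (31, "split"), (47, "wrinkle"),
             (58, "split"), (3_410, "burr"), (7_902, "split"), (9_950, "burr")]

# Average scrap rate, expressed two common ways.
scrapped = len(scrap_log)
percent_scrap = scrapped * 100 / total_parts
ppm = scrapped * 1_000_000 / total_parts
print(f"Average scrap rate: {percent_scrap:.2f}% ({ppm:.0f} PPM)")

# The same records, kept raw, show why and when parts were scrapped.
by_defect = Counter(defect for _, defect in scrap_log)
first_100 = sum(1 for hit, _ in scrap_log if hit <= 100)
print(f"Scrap by defect type: {dict(by_defect)}")
print(f"Scrapped within the first 100 hits: {first_100} of {scrapped}")
```

The 0.08% (800 PPM) figure looks the same whether the scrap is spread evenly or, as in this made-up log, mostly splits clustered at the start of the run; only the raw records point toward a start-up problem worth investigating.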